The recipe app I couldn't build six years ago

Six years ago I helped a CPG company dream up a smart meal planner. We never shipped it. This year, my wife and I built it — and we use it every week.

About six years ago, I was working with a multinational CPG company on what we called "smart meal planning." The idea was compelling: take everything a household knows about itself — dietary restrictions, budget, what's already in the fridge — and generate personalized weekly menus with recipes, nutrition, and a shopping list. Integrate with grocery retailers. Learn preferences over time. The pitch decks were beautiful.

We never shipped it. The personalization engine alone was a multi-year investment. Recipe generation at scale meant either licensing expensive databases or building a content team. Integrating with grocery store APIs required partnerships that moved at enterprise speed. The project lived in strategy decks and died in roadmap prioritization. A scaled enterprise team, and we couldn't get past planning.

I never stopped thinking about it.

The itch that wouldn't go away

The professional interest was one thing. But the personal need was just as real. Every Sunday, Johanna and I would have the same conversation: what are we eating this week? We'd try to balance nutrition, account for the kids' preferences, avoid buying things we already had, stay within budget, not repeat last week's meals. It would take thirty minutes of flipping between recipe sites and a grocery app, and we'd still end up improvising by Wednesday.

I knew exactly what the product should look like — I'd helped design one. I just couldn't build it.

A different starting point

Earlier this year, we decided to actually build the thing. Not an enterprise product backed by a scaled team and a seven-figure budget. A real app, for our family first, then for other Swedish households — built by the two of us around day jobs and bedtime routines.

The difference between 2020 and 2026 isn't just that the models got smarter. It's that the development workflow changed. I've spent the last few months building a context management system for AI-assisted work — structured .md files as external memory, explicit protocols for maintaining state across sessions, and eventually agent teams where specialized AI agents coordinate through shared files.

Matbotten was the first real stress test of that system on a production codebase.

What we built

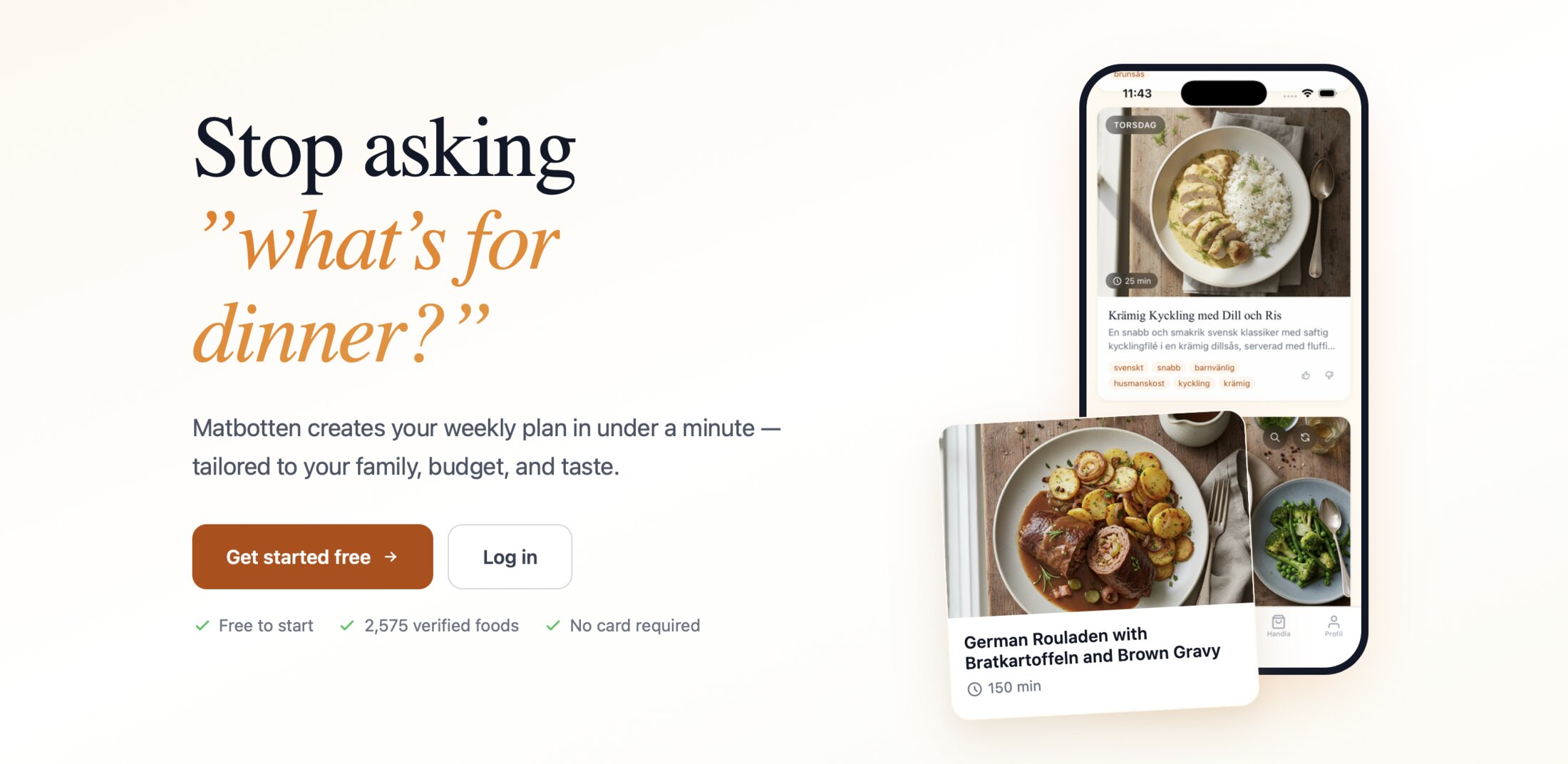

Matbotten is a personalized weekly meal planner for Swedish households. You set up a family profile — dietary needs, favourite cuisines, how much time you want to spend cooking, your budget. Matbotten generates a complete weekly menu with recipes, nutrition breakdowns, and a shopping list that accounts for what you already have in your pantry. Rate meals and it learns what your family likes over time. It integrates with Swedish grocery stores and shopping list apps.
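To make the pantry-aware shopping list concrete, here is a minimal Python sketch of the idea: add up what the week's recipes need, then subtract what is already at home. It is an illustration only, not Matbotten's code (the app runs on Express). The function name, the `(ingredient, unit)` keys, and the sample data are all hypothetical.

```python
from collections import defaultdict


def build_shopping_list(recipes, pantry):
    """Sum ingredient needs across recipes, then subtract pantry stock.

    recipes: list of dicts mapping (ingredient, unit) -> quantity needed
    pantry:  dict mapping (ingredient, unit) -> quantity on hand
    Both shapes are assumptions made for this sketch.
    """
    needed = defaultdict(float)
    for recipe in recipes:
        for key, qty in recipe.items():
            needed[key] += qty

    shopping = {}
    for key, qty in needed.items():
        missing = qty - pantry.get(key, 0.0)
        if missing > 0:  # only buy what the pantry does not cover
            shopping[key] = missing
    return shopping


# Invented sample data: two recipes and a small pantry.
week = [
    {("pasta", "g"): 400, ("tomato", "pcs"): 4},
    {("tomato", "pcs"): 3, ("egg", "pcs"): 6},
]
pantry = {("pasta", "g"): 500, ("tomato", "pcs"): 2}

shopping_list = build_shopping_list(week, pantry)
print(shopping_list)
```

In this example the pasta drops off the list because the pantry covers it, while tomatoes are reduced by the two already on hand. A real version would also need unit conversion and ingredient matching, which the sketch ignores.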

The stack is Express and Handlebars, PostgreSQL for user data and preferences, Google Gemini for recipe generation, and Stripe for payments. 418 automated tests. Live, with paying users, and fast.

For our own household, it replaced that entire Sunday planning ritual. A few minutes setting preferences and checking what's in the pantry, and the week is sorted. Ingredients show up at our door. The thing I'd spent months designing in workshops and strategy sessions — we actually use it now.

Context management at scale

Matbotten has real complexity. Recipe generation is the flashy part, but the hard problems are elsewhere: nutritional balancing across a full week, pantry state management, preference learning from sparse signals, Stripe subscription logic, and the dozens of edge cases that emerge when real families use the thing.

This is exactly the kind of project where context rot kills you. By the time you're debugging a Stripe webhook, the AI has forgotten the data model decisions you made for the pantry tracker. By the time you're building the preference engine, the nutritional constraints from three sessions ago are buried under fifty messages about CSS.

The five .md files did the heavy lifting. DECISIONS.md alone saved me from re-litigating the recipe generation approach at least four times — every time the conversation drifted toward "should we use a recipe database instead of generating?", the decision record had the rationale: generation gives us infinite variety, handles dietary restrictions natively, and avoids licensing costs.

TECHNICAL.md captured the API contracts between the meal planner, the recipe generator, and the grocery integration. When building a new feature, Claude could re-read the relevant interfaces instead of reconstructing them from conversation history.

The handover protocol — writing HANDOVER.md at the end of every session — meant I could work on it in 90-minute windows. Kids in bed, two focused sessions, meaningful progress. No "where were we?" warmup. The handover had the answer.
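For readers who want a starting point, a handover file can be as simple as a few fixed sections filled in at the end of a session. The Python sketch below renders one. The section titles, function names, and example entries are assumptions for illustration, not the actual layout of my HANDOVER.md.

```python
from datetime import date
from pathlib import Path


def render_handover(done, in_progress, next_steps, open_questions, day=None):
    """Render a session handover as markdown text.

    The four section titles are hypothetical; adapt them to your own protocol.
    """
    day = day or date.today()
    sections = [
        ("Done this session", done),
        ("In progress", in_progress),
        ("Next steps", next_steps),
        ("Open questions", open_questions),
    ]
    lines = [f"# Handover {day.isoformat()}", ""]
    for title, items in sections:
        lines.append(f"## {title}")
        # An empty section is written explicitly so nothing looks forgotten.
        lines.extend(f"- {item}" for item in items or ["(none)"])
        lines.append("")
    return "\n".join(lines)


def write_handover(path, text):
    """Overwrite the handover file so the next session starts from one source."""
    Path(path).write_text(text, encoding="utf-8")


# Example entries, invented for illustration.
handover = render_handover(
    done=["Pantry tracker: expiry date field added"],
    in_progress=["Preference learning from meal ratings"],
    next_steps=["Review subscription edge cases"],
    open_questions=[],
    day=date(2026, 1, 15),
)
print(handover)
```

Calling `write_handover("HANDOVER.md", handover)` at the end of a session would replace the previous file, so the next session reads only the latest state.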

Where agent teams made the difference

Partway through the build, I switched from a single Claude session to the Lead / Builder / Validator pattern. The impact was immediate on two fronts.

First, recipe generation quality. The Validator could compare generated recipes against nutritional targets, check that ingredient quantities made sense, and verify that the weekly menu actually hit the dietary balance we promised. Before, I was doing this manually, scanning recipe output and eyeballing macros. The Validator caught things I missed: a week where three dinners were essentially the same protein, and a recipe that claimed 20 minutes but had a step that took 45.
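The two catches mentioned above are the kind of check that is easy to express as code. The Python sketch below shows one possible version of each, under stated assumptions: the recipe fields (`protein`, `claimed_minutes`, `steps`) are hypothetical, the repeat limit of two is arbitrary, and step times are summed as if steps never overlap. It is not the Validator agent's actual logic, which worked from prompts and shared files.

```python
from collections import Counter


def repeated_proteins(dinners, max_repeats=2):
    """Return proteins that appear in more than max_repeats dinners."""
    counts = Counter(d["protein"] for d in dinners)
    return sorted(p for p, n in counts.items() if n > max_repeats)


def understated_times(recipes):
    """Return (name, claimed, total) where step times exceed the claimed time.

    Assumes steps run one after another, which overestimates parallel work.
    """
    flagged = []
    for r in recipes:
        total = sum(step["minutes"] for step in r["steps"])
        if total > r["claimed_minutes"]:
            flagged.append((r["name"], r["claimed_minutes"], total))
    return flagged


# Invented sample week.
week = [
    {"name": "Chicken stir-fry", "protein": "chicken", "claimed_minutes": 20,
     "steps": [{"minutes": 10}, {"minutes": 10}]},
    {"name": "Chicken curry", "protein": "chicken", "claimed_minutes": 20,
     "steps": [{"minutes": 5}, {"minutes": 45}]},
    {"name": "Chicken tacos", "protein": "chicken", "claimed_minutes": 30,
     "steps": [{"minutes": 15}, {"minutes": 10}]},
    {"name": "Lentil soup", "protein": "lentils", "claimed_minutes": 40,
     "steps": [{"minutes": 10}, {"minutes": 30}]},
]

protein_issues = repeated_proteins(week)
time_issues = understated_times(week)
print(protein_issues)
print(time_issues)
```

Deterministic checks like these complement an AI reviewer: they are cheap to run on every generated menu and leave the judgment calls, such as whether two dishes feel too similar, to the Validator.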

Second, the test suite. 418 tests didn't write themselves over a weekend. The Builder generated tests in batches, the Validator reviewed coverage gaps against the feature spec, and the Lead coordinated which areas needed more testing based on user-facing risk. The cycle was tight: Builder writes a batch, Validator flags gaps, Builder fills them. I made prioritization calls.

The context separation mattered here too. The Builder's window was full of Express route handlers and PostgreSQL queries. The Validator's window was full of test coverage reports and feature requirements. Neither was polluted with the other's concerns.

The CPG knowledge advantage

The part that AI couldn't provide was the domain model. How should a weekly meal plan be structured? What's the right ratio of quick meals to slow-cook nights for a family where both parents work? How do you handle the pantry-to-shopping-list transition without creating waste?

These aren't engineering questions. They're product questions, and the answers came from years of user research in the CPG space. I'd sat in workshops with nutritionists, watched families plan meals in their kitchens, analysed shopping data at a scale most app developers never see.

That domain knowledge became the skeleton that everything else hung on. The AI handled the implementation velocity — translating "the pantry tracker should suggest using items approaching expiry" into actual code. But knowing that feature mattered, and knowing how users think about their pantry, came from a previous life.
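As a rough picture of what "suggest using items approaching expiry" can turn into, here is a minimal Python sketch. The three-day window, the function name, and the pantry item fields are assumptions for illustration, not the shipped implementation.

```python
from datetime import date, timedelta


def items_to_use_first(pantry, today, within_days=3):
    """Return pantry items expiring within the window, soonest first.

    Items that have already expired are left out; handling those is a
    separate product decision.
    """
    cutoff = today + timedelta(days=within_days)
    expiring = [item for item in pantry if today <= item["expires"] <= cutoff]
    return sorted(expiring, key=lambda item: item["expires"])


# Invented sample pantry.
today = date(2026, 3, 2)
pantry = [
    {"name": "rice", "expires": date(2027, 1, 1)},
    {"name": "spinach", "expires": date(2026, 3, 4)},
    {"name": "cream", "expires": date(2026, 3, 3)},
    {"name": "yogurt", "expires": date(2026, 3, 1)},  # already expired
]

use_first = [item["name"] for item in items_to_use_first(pantry, today)]
print(use_first)
```

The code is the easy part. Choosing the window, deciding what to do with expired items, and knowing that families care about this at all are the product decisions the domain knowledge supplied.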

This is the part that gets lost in the "AI will replace developers" discourse. The models are extraordinary at implementation. They're getting better at architecture. But product judgment — knowing what to build and why — still comes from having been close to the problem. The AI made me faster. The CPG experience made me right.

From dream to dinner

Six years ago: a scaled enterprise team, partnership negotiations with grocery chains, months of planning. The project stalled.

This year: structured AI collaboration, domain knowledge from a previous career, and a family that was tired of the Sunday planning ritual. The product is live — and we eat from it every week.

I don't think this is just a story about AI making development faster. It's about how the barrier to shipping real products has fundamentally shifted. The constraint used to be engineering capacity — you needed a team to build something this complex. Now the constraint is product knowledge and context discipline. Knowing what to build, and maintaining enough coherence across sessions to actually finish it.

The context management system I've been writing about wasn't an academic exercise. It was the scaffolding that let us build something that used to require a department. The .md files, the handover protocol, the agent teams — they're not theoretical. They're how Matbotten went from "remember that idea we had?" to a working product that plans our meals every week.

If you've been sitting on a product idea because the engineering lift felt too heavy — the lift is lighter now. The hard part was always knowing what to build. If you have that, the tools are ready.